Chirp is a team of audio scientists and DSP engineers whose mission is to simplify machine-to-machine connectivity using sound, in response to the growing need for seamless, low-power, and low-cost device-to-device communication in the emerging IoT landscape.

In early 2019, Chirp capitalized on the digital signal processing (DSP) capabilities of the Arm Cortex-M4 and Cortex-M7 processors to engineer a software-defined data-over-sound solution that is robust and reliable without being resource-intensive, enabling connectivity and application logic to reside on a single core. This article explores how data-over-sound can be realized in embedded scenarios and how third-party engineers can implement this technology in their IoT products.

What is Data-Over-Sound?

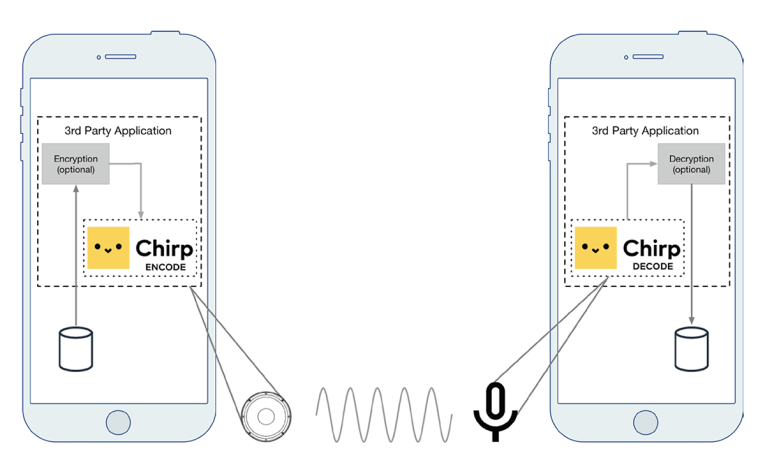

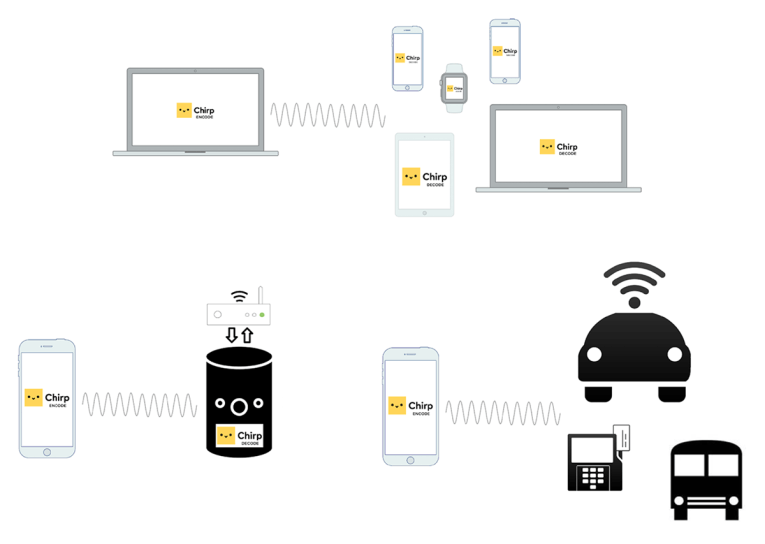

The basic idea of data-over-sound is no more complex than that of a traditional telephone modem. Data is encoded into an acoustic signal, which is played through a medium (typically the air, although it could equally be a wired telephone line or VoIP stream) and picked up by a “listening” device. The listening device then demodulates and decodes the acoustic signal to recover the original data. This process is illustrated in Figure 1.

The Missing Piece of the Connectivity Puzzle

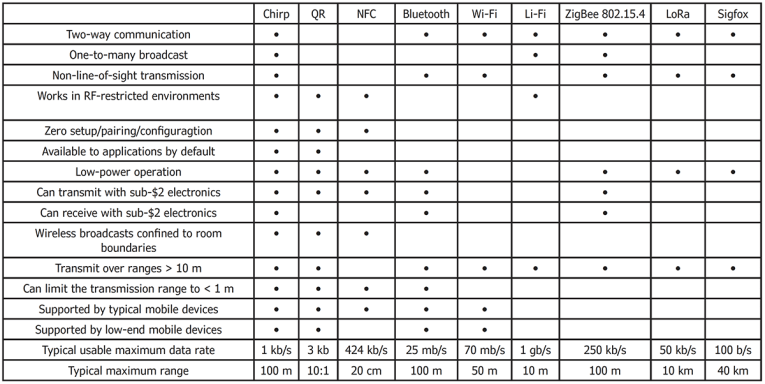

A plethora of connectivity solutions already exists, including extremely short range (NFC and QR); short range, high bandwidth (Bluetooth and Wi-Fi); long range, low power (Sigfox and LoRa); and short range, low power (Zigbee). Each technology has certain advantages that make it more or less suitable for specific applications. Before we delve into the technicalities of Chirp’s solution, we’ll cover some of the properties of data-over-sound that make it a cost-effective and versatile medium for application connectivity.

As any technology provider in the IoT arena will appreciate, application-specific connectivity needs are not always as simple as choosing a technology based on cost, data rate, and range. Things get a lot more complicated when one considers the finer details of device support, backward compatibility, user experience, and security. Further complexity comes from the technical specifications of the protocol, such as the nature of the transfer medium (RF, optical, acoustic), the number of required channels, and whether two-way and one-to-many communication is needed.

• Device interoperability: The simple hardware requirement of a speaker and/or microphone makes data-over-sound arguably the most wide-reaching wireless communication technology in terms of device compatibility. Mobile phones, voice-controlled devices, and any device with an alarm speaker are able to communicate using data-over-sound. This includes many legacy devices. Data can also be transmitted over media channels, such as radio and TV, and over existing telephone lines.

• Frictionless UX: Data-over-sound requires no pairing or configuration, making data transfer as simple as pressing a button.

• Physically bounded: Because sound waves respect room boundaries, particularly in the near-ultrasonic range commonly used in data-over-sound, transmissions do not pass between neighboring rooms. This means that data-over-sound can be used to detect the presence of a device with room-level granularity.

• Low or no bill-of-materials (BOM): Data-over-sound adds nothing to a device BOM if the specification already includes audio I/O, which is becoming increasingly commonplace. If audio I/O is required only for data-over-sound functionality, the sender and receiver components add less than $2 to the BOM.

• Zero power: The advent of “wake-on-sound” MEMS microphones (e.g., the Vesper VM1010) enables devices to communicate using data-over-sound, while draining virtually no battery power in between communications (< 10 μA).
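To put the < 10 μA figure in perspective, the short sketch below turns an average current into an idealized standby time. The 220 mAh capacity (typical of a CR2032 coin cell) is an illustrative assumption, not a figure from Chirp or Vesper, and the estimate ignores self-discharge and the energy spent while actually communicating.

```c
/* Idealized standby life in hours: capacity (mAh) / average current (mA).
 * Ignores self-discharge, temperature, and the wake-up events themselves,
 * so the result is an upper bound. */
double standby_hours(double capacity_mah, double avg_current_ua)
{
    double avg_current_ma = avg_current_ua / 1000.0;  /* convert uA to mA */
    return capacity_mah / avg_current_ma;
}
```

For example, a 220 mAh cell at a constant 10 μA gives 22,000 hours, or roughly two and a half years of listening.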

Simple to Make, Difficult to Master

So how does it work? Chirp uses Frequency Shift Keying (FSK) as its modulation scheme because it is more robust than schemes such as Phase Shift Keying (PSK) or Amplitude Shift Keying (ASK) to the multipath propagation present in real-world acoustics. For spectral efficiency, Chirp uses an M-ary FSK scheme, encoding each input symbol as one of M unique frequencies. Each symbol’s tone is shaped by an amplitude envelope to avoid discontinuities, and a guard interval between symbols reduces the impact of reflections and reverberation at the decoding stage.

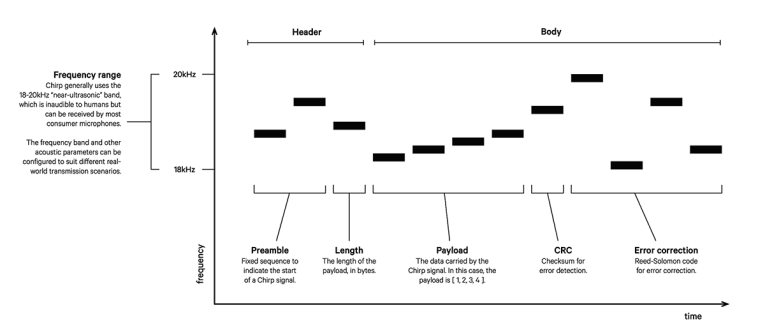

As with other data transmission protocols, the message can include specific sequences to indicate the start and expected length of a message and to establish timing and synchronization, as well as additional bytes for Reed-Solomon forward error correction (FEC) and CRC error detection, as shown in Figure 2. Encoding a Chirp signal in this manner is a lightweight process in terms of memory and CPU, requiring only the generation of error correction symbols and sinusoidal oscillator synthesis.
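The CRC step can be sketched briefly. The article does not say which CRC Chirp uses, so as an assumption the example below uses the common CRC-8 with polynomial 0x07 (initial value 0x00, MSB-first, no final XOR) to show how a receiver might validate a decoded payload. The frame layout (payload followed by one CRC byte) is likewise hypothetical, and Reed-Solomon encoding is omitted.

```c
#include <stddef.h>
#include <stdint.h>

/* CRC-8, polynomial x^8 + x^2 + x + 1 (0x07), init 0x00, MSB-first,
 * no final XOR. An illustrative choice, not Chirp's documented checksum. */
uint8_t crc8(const uint8_t *data, size_t len)
{
    uint8_t crc = 0x00;
    for (size_t i = 0; i < len; i++) {
        crc ^= data[i];
        for (int bit = 0; bit < 8; bit++) {
            /* Shift left; if the top bit fell out, fold in the polynomial. */
            crc = (crc & 0x80) ? (uint8_t)((crc << 1) ^ 0x07) : (uint8_t)(crc << 1);
        }
    }
    return crc;
}

/* Receiver side of a hypothetical frame: payload bytes, then one CRC byte.
 * Returns 1 if the CRC matches, 0 otherwise. */
int frame_is_valid(const uint8_t *frame, size_t frame_len)
{
    if (frame_len < 1) {
        return 0;
    }
    return crc8(frame, frame_len - 1) == frame[frame_len - 1];
}
```

Any single flipped bit in the payload changes the CRC, so the receiver can reject a corrupted message that FEC failed to repair.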

The encoding process and the resulting audio signal are well understood in the worlds of audio signal processing and communications systems. Given an ideal communication channel (with minimal noise and distortion), decoding is as simple as identifying the peak frequencies in the audio stream and mapping them onto symbols to recover the original message. The robustness of this method over near-ideal channels is probably best demonstrated by the ubiquity of audio FSK systems in the form of the famous telephone modem.

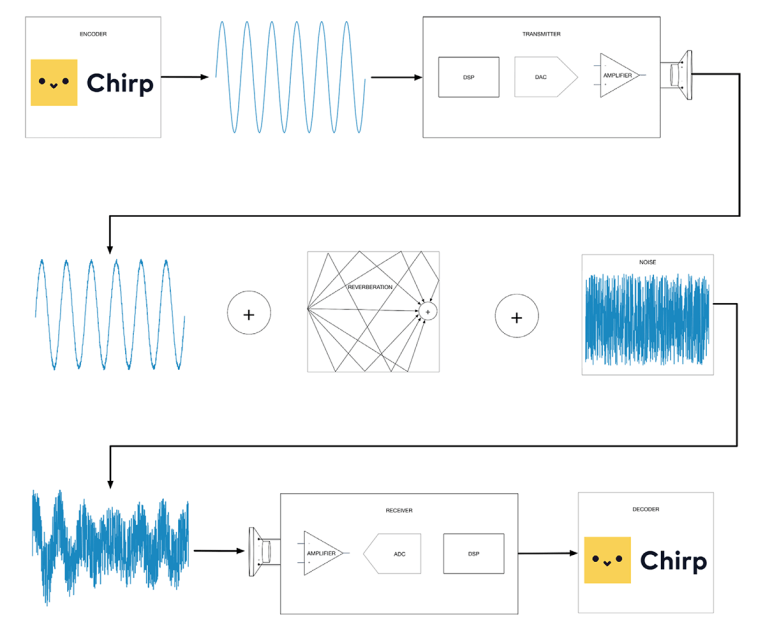

However, in the world of acoustic transmission over the air, things are not so simple. Chirp’s technology is typically used in challenging acoustic environments, with potentially high levels of background noise (e.g., from crowded city streets, busy cafes, or noisy domestic environments containing music, television and people talking over each other). In addition to background interference, we know that audio is particularly susceptible to distortion from the acoustic environment in terms of reverberation, bringing with it issues of resonance, phase cancellation, and comb filtering effects.

If these factors alone are not challenging enough, Chirp prides itself on the ubiquity of its technology—potentially supporting any device with a microphone and/or loudspeaker. Chirp’s SDKs offer support for most platforms, including iOS, Android, Windows, macOS, Web (JS), and embedded boards running Arm processors, along with a dedicated toolkit for Amazon Alexa.

Each device has unique operating characteristics in the audio signal chain, including the frequency and phase responses of the transducers, ADC, DAC, and physical layout (e.g., casing), not to mention the onboard proprietary DSP found on many modern devices. All of these parts have the potential to interfere with and distort the signal during its transmission from sender to receiver, as shown in Figure 3.

At Chirp, seven years of focused research and the resulting IP have enabled us to build robust audio-based communications systems that perform reliably in a wide range of acoustic environments. Among the existing data-over-sound solutions on the market, Chirp’s main differentiator is the reliability and robustness of its internal decoding technology.

Figure 4: The trade-off between data rate, range, and reliability.

So how reliable is Chirp? The short answer is that it depends on the system requirements. There is an inherent trade-off between range, data rate, and reliability (see Figure 4). All of Chirp’s standard profiles are tested to perform at >99% reliability under normal operating conditions. This level of performance can be maintained under particularly challenging acoustic conditions (e.g., with low signal-to-noise ratios and sub-optimal transducers); however, it is ultimately a function of range and data rate. One can expect reliable transmission from 1 cm to 100 m, subject to data rate requirements. This has been demonstrated and stress-tested in acoustically extreme environments, including nuclear power stations with upward of 100 dB(A) of background noise, across ranges exceeding 100 m.
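One way to see the trade-off is through the raw bit rate of an M-ary FSK scheme: each symbol carries log2(M) bits and occupies the tone duration plus the guard interval, while FEC and CRC bytes reduce the useful payload rate further. The sketch below computes both; the function names and example figures are hypothetical and do not describe any of Chirp’s profiles.

```c
/* Raw M-ary FSK bit rate in bits per second, before framing or FEC overhead.
 * m should be a power of two; otherwise log2(m) is rounded down. */
double fsk_bit_rate(unsigned m, double symbol_s, double guard_s)
{
    unsigned bits_per_symbol = 0;
    while ((1u << (bits_per_symbol + 1)) <= m) {  /* integer floor(log2(m)) */
        bits_per_symbol++;
    }
    return bits_per_symbol / (symbol_s + guard_s);
}

/* Useful payload rate once `overhead_bytes` of every frame are FEC/CRC. */
double payload_rate(double raw_bps, unsigned payload_bytes, unsigned overhead_bytes)
{
    return raw_bps * payload_bytes / (double)(payload_bytes + overhead_bytes);
}
```

For example, 16 tones with 80 ms symbols and 20 ms guards give 4 bits every 100 ms, or 40 bit/s raw; if half of each frame is error-correction overhead, the useful rate drops to 20 bit/s. Shortening symbols and guards raises the rate but leaves less time for reverberation to decay, which is one reason longer range and harsher environments tend to call for slower profiles.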

Applications and Use Cases

In response to the connectivity requirements discussed in the previous sections, certain application areas have emerged as the drivers of data-over-sound in IoT contexts, primarily in scenarios in which it significantly reduces friction or human effort (see Figure 5). We will now outline four such areas, where data-over-sound is the only data communication solution that meets all of the connectivity requirements.

IoT Device Provisioning: Configuring a new smart device with network credentials and functional configuration remains a disproportionately complex task, particularly for headless devices. Although technologies such as WPS have emerged to address this challenge, these typically require support on the wireless access point or wider infrastructure or, in some cases, physical access to the AP itself.

This use case typically requires a solution that offers seamless and rapid setup with no pairing or configuration, one-to-many transmission (including non-line-of-sight operation and >10 m range), and no additional hardware requirements on the router. Chirp offers an approach to provisioning that is offline, locally bounded, and does not require any infrastructure modifications.

Credentials are encoded as audio, optionally layered with cryptography for secure scenarios, and broadcast over-the-air to nearby smart devices. The BOM needed to receive and decode credentials can be as minimal as an Arm Cortex-M4 processor plus a digital MEMS microphone.
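As an illustration of what the encoded credentials might contain, the sketch below packs an SSID and passphrase into a length-prefixed byte payload that could then be handed to a data-over-sound sender. The layout (one length byte per field) and the function name are hypothetical; the article does not specify Chirp’s provisioning format, and any optional encryption would be applied to this buffer before transmission.

```c
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* Hypothetical layout: [ssid_len][ssid bytes][pass_len][pass bytes].
 * Returns the payload length, or 0 if a field is too long or `out` is too small. */
size_t pack_wifi_credentials(const char *ssid, const char *pass,
                             uint8_t *out, size_t out_cap)
{
    const size_t ssid_len = strlen(ssid);
    const size_t pass_len = strlen(pass);
    const size_t total = 2 + ssid_len + pass_len;

    /* 802.11 limits SSIDs to 32 bytes; WPA2 ASCII passphrases to 63 characters. */
    if (ssid_len > 32 || pass_len > 63 || total > out_cap) {
        return 0;
    }
    out[0] = (uint8_t)ssid_len;
    memcpy(out + 1, ssid, ssid_len);
    out[1 + ssid_len] = (uint8_t)pass_len;
    memcpy(out + 2 + ssid_len, pass, pass_len);
    return total;
}
```

Length prefixes keep the receiver’s parser trivial on a Cortex-M4 and allow SSIDs or passphrases that contain any byte value.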

Proximity and Presence Detection: This use case requires a one-to-many connectivity solution that respects room boundaries and has universal device support. A key property of acoustic signals is that their propagation respects room boundaries, particularly in the near-ultrasonic range. As a consequence, near-ultrasonic acoustic beacons are increasingly being adopted for presence sensing at room-level granularity. Devices can send out sonar-like beacon packets at regular intervals, enabling nearby peers to discover them. Chirp’s implementation uses multi-channel encoding to support multiple devices within hearing range, with carrier sensing to detect free channels.
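Carrier sensing can be sketched as a listen-before-talk check: measure the energy in each channel’s frequency band over a short window, then transmit on the quietest channel that falls below a busy threshold. The per-channel energies are taken as input here (they could come from a band-power measurement such as the Goertzel algorithm), and the function name and threshold are hypothetical; the article does not describe Chirp’s exact method.

```c
#include <stddef.h>

/* Returns the index of the quietest channel whose measured band energy is
 * below `threshold`, or -1 if every channel appears busy (the caller should
 * back off and listen again before transmitting). */
int pick_free_channel(const float *energy, size_t num_channels, float threshold)
{
    int best = -1;
    for (size_t c = 0; c < num_channels; c++) {
        if (energy[c] < threshold && (best < 0 || energy[c] < energy[best])) {
            best = (int)c;
        }
    }
    return best;
}
```

Preferring the quietest free channel, rather than the first, leaves more margin against a neighboring device that starts transmitting during the next window.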

Two-Way Acoustic NFC: Data-over-sound supports near-field communication scenarios while offering a two-way full-duplex mode of communication, thus addressing a critical limitation of NFC. Enabling two-way data exchange means that devices can perform challenge-and-response dialogues — for example, Diffie-Hellman key exchange for secure financial transactions, or securely sending un-spoofable receipts to a merchant or buyer.
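To make the challenge-and-response idea concrete, the sketch below implements the modular exponentiation at the heart of a toy Diffie-Hellman exchange: each side sends g^secret mod p over the acoustic link, and both derive the same shared value without ever transmitting it. The small parameters are for illustration only and offer no security; a real deployment would use a vetted cryptographic library and a standard group or curve such as X25519.

```c
#include <stdint.h>

/* Modular exponentiation by repeated squaring: base^exp mod m.
 * Products are computed in 64 bits, so m must be below 2^32. */
uint64_t mod_pow(uint64_t base, uint64_t exp, uint64_t m)
{
    uint64_t result = 1 % m;
    base %= m;
    while (exp > 0) {
        if (exp & 1) {
            result = (result * base) % m;  /* include this bit's power */
        }
        base = (base * base) % m;          /* square for the next bit */
        exp >>= 1;
    }
    return result;
}
```

With the classic textbook values p = 23 and g = 5, a device with secret 6 sends 5^6 mod 23 = 8, its peer with secret 15 sends 5^15 mod 23 = 19, and both compute the shared value 2 (19^6 mod 23 and 8^15 mod 23).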

The low power requirements and low cost of audio components, combined with the fact that data-over-sound is software-defined and thus requires no additional hardware, mean that acoustic NFC is a powerful emerging approach to peer-to-peer payments and minimal-cost POS devices.

Telemetry in RF-Restricted Environments: In many sensitive environments, RF-based communications are prohibited due to the risk of sparks or interference with equipment that predates RF regulation. In addition, communications systems in these environments can require non-line-of-sight, two-way communication with a range of up to 100 m. Chirp meets these requirements, enabling the industrial IoT to harness the benefits of wireless communication without relying on the radio spectrum.

Data-Over-Sound on Arm Cortex-M Processors

Chirp has recently developed a data-over-sound SDK for Arm Cortex-M4- and Cortex-M7-based MCUs. The DSP features of these chips allow high-sample-rate audio processing without the need for hardware acceleration, enabling the encoding and decoding of near-ultrasonic chirps. This places the technology in the hands of device manufacturers and developers who wish to take advantage of a frictionless, low-cost solution for device provisioning, zero-power monitoring, or RF-free telemetry in general.

The suite of Chirp C SDKs is compact but fully featured, allowing developers to use Chirp’s technology even on low-power, memory-constrained devices. The Chirp Arm SDK is optimized for Cortex-M processors, with support for both the Cortex-M4 and Cortex-M7. It is distributed as a static library (approximately 740 KiB in size) with a straightforward API for sending and receiving data via Chirp signals.

The SDK implements the physical and data link layers of the OSI stack for data-over-sound. Because Chirp is software-defined, additional layers can easily be implemented on top of the SDK if features such as encryption, application-specific protocols, or end-to-end transmission/QoS are required.

Chirp can be incorporated into the software stack of existing projects by integrating the library’s static archive (.a) and header (.h) files. API calls are available for the following functions:

• Initialization and termination, to allocate and free the main structures of the SDK

• Audio processing interaction, in which arrays of audio samples are exchanged between the audio I/O drivers and the Chirp SDK

• Payload handling, including creation and transmission of data

• Parameter getters and setters for properties such as software volume, SDK states, and internal parameters (e.g., audio sample rate)

• Configuration of user profile and audio transmission properties, for application-specific communication protocols

• Debugging helpers, for converting payloads and error codes into human-readable strings
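A debugging helper of the kind listed above can be as simple as rendering a payload as a hex string for logs. The function below is a hypothetical sketch of the idea and is not part of the Chirp SDK’s API.

```c
#include <stddef.h>
#include <stdint.h>

/* Writes `len` bytes as lowercase hex into `out`, which must hold
 * 2 * len + 1 characters. Returns `out` for convenient use in logging. */
char *payload_to_hex(const uint8_t *payload, size_t len, char *out)
{
    static const char digits[] = "0123456789abcdef";
    for (size_t i = 0; i < len; i++) {
        out[2 * i] = digits[payload[i] >> 4];        /* high nibble */
        out[2 * i + 1] = digits[payload[i] & 0x0F];  /* low nibble */
    }
    out[2 * len] = '\0';
    return out;
}
```

Writing into a caller-supplied buffer avoids dynamic allocation, which matters on memory-constrained targets.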

Protocol definitions are handled via a cloud service and associated with specific users/clients. Because protocols are often updated, and some users require bespoke protocols for specific applications, this provides a simple way to update a protocol without changing any of the application code.

Decoding messages requires processing relatively large amounts of audio data in a short space of time. By building on the open-source CMSIS-DSP library, the Chirp Arm SDK takes full advantage of the optimized DSP instructions of the Cortex-M processors. In particular, the SIMD instructions allow the Chirp decoder to process large audio buffers in real time without incurring CPU overruns.

In order to monitor and manage the memory usage, Chirp has developed the Chirp Memory Manager, an open-source library for tracking memory usage in C. This provides a single entry point for allocating and freeing dynamic memory. Using the memory manager, it is possible to track the dynamic allocations and query the current allocated memory at any time in the life cycle of the program.
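The idea of a single entry point for tracking dynamic memory can be sketched with wrappers that store each block’s size in a small header and keep a running total. The names below (`tracked_malloc`, `tracked_free`, `tracked_bytes_in_use`) are hypothetical and are not the Chirp Memory Manager’s API.

```c
#include <stddef.h>
#include <stdlib.h>

/* Header stored in front of every allocation. The extra members only force
 * a conservative alignment so the user pointer stays suitably aligned. */
typedef union {
    size_t size;
    long double ld;
    void *ptr;
    long long ll;
} alloc_header;

static size_t bytes_in_use = 0;  /* not thread-safe; guard it on an RTOS */

void *tracked_malloc(size_t size)
{
    alloc_header *h = malloc(sizeof(alloc_header) + size);
    if (h == NULL) {
        return NULL;
    }
    h->size = size;
    bytes_in_use += size;
    return h + 1;  /* user memory starts right after the header */
}

void tracked_free(void *ptr)
{
    if (ptr == NULL) {
        return;
    }
    alloc_header *h = (alloc_header *)ptr - 1;  /* step back to the header */
    bytes_in_use -= h->size;
    free(h);
}

size_t tracked_bytes_in_use(void)
{
    return bytes_in_use;
}
```

Because every allocation passes through the same two functions, the current total can be queried at any point in the program’s life cycle at the cost of one header per block.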

If there is sufficient memory headroom, additional statistics can be calculated and stored during the lifecycle of the program, logging information about every allocation and free (relative time, file, function, type allocated, and size of the allocation).
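Per-call statistics like these are commonly captured with macros that pass `__FILE__`, `__func__`, and the stringified type name to the allocator. The sketch below records each event in a fixed-size table; the record layout, the `MM_ALLOC`/`MM_FREE` macro names, and the use of a sequence counter in place of relative time are illustrative assumptions, not the Chirp Memory Manager’s format.

```c
#include <stddef.h>
#include <stdlib.h>

#define MM_LOG_CAPACITY 64

typedef struct {
    unsigned long seq;      /* event order; a real target might store a timer tick */
    const char *file;
    const char *func;
    const char *type_name;  /* NULL for frees */
    size_t size;            /* bytes requested (0 for frees) */
} mm_event;

static mm_event mm_log[MM_LOG_CAPACITY];
static size_t mm_log_len = 0;
static unsigned long mm_seq = 0;

static void mm_record(const char *file, const char *func,
                      const char *type_name, size_t size)
{
    if (mm_log_len < MM_LOG_CAPACITY) {  /* drop events once the table is full */
        mm_event e = { mm_seq, file, func, type_name, size };
        mm_log[mm_log_len++] = e;
    }
    mm_seq++;
}

void *mm_malloc_at(size_t size, const char *type_name,
                   const char *file, const char *func)
{
    mm_record(file, func, type_name, size);
    return malloc(size);
}

void mm_free_at(void *ptr, const char *file, const char *func)
{
    mm_record(file, func, NULL, 0);
    free(ptr);
}

/* Call sites use these macros so type, file, and function are captured automatically. */
#define MM_ALLOC(type, count) \
    ((type *)mm_malloc_at(sizeof(type) * (count), #type, __FILE__, __func__))
#define MM_FREE(ptr) mm_free_at((ptr), __FILE__, __func__)
```

A fixed-size table keeps the logging itself free of dynamic allocation; once it fills, later events are dropped rather than overwriting earlier ones.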

Using the CMSIS library and Chirp Memory Manager, the memory and CPU requirements of the Chirp Arm SDK have been reduced to levels that not only perform within the hardware limitations, but leave ample headroom for additional processes and sophisticated application logic to run alongside Chirp.

The Future of IoT with Data-Over-Sound

By expanding the suite of Chirp SDKs to include the Arm Cortex-M4 and Cortex-M7 processors, Chirp has substantially lowered the barrier to incorporating data-over-sound into key IoT applications.

One of the major threats to the mass adoption of IoT technology is the age-old conflict between performance and price. We are entering an age where people expect even the cheapest smart devices to be able to process real-time audio and perform intelligent digital signal processing (DSP) on the edge device. The Cortex-M series of processors addresses this need by providing an extremely cost-effective solution that is capable of high-performance real-time audio DSP.

This article has presented the fundamental concepts and benefits of data-over-sound connectivity and explored key application areas within the IoT, including provisioning smart devices and facilitating secure near-field communication in low-cost, low-power scenarios. Moreover, because data-over-sound is a pairing-free, one-to-many medium, it addresses some of the pain points of longstanding alternatives such as Bluetooth and Wi-Fi, presenting an attractive general-purpose solution for frictionless data transmission.

To learn more about Chirp’s SDK for Arm’s Cortex-M processor series, go to https://blog.chirp.io/arm-whitepaper-2.

This article was originally published in audioXpress, November 2019.

About the Author

Dr Adib Mehrabi is an accomplished research scientist with a background spanning audio engineering, acoustics, DSP, machine learning, and speech processing. He holds a BSc (Hons) in Audio Technology from UWE, Bristol and a PhD in Computer Science from Queen Mary University of London. Adib currently heads up the research efforts at Chirp, where his work focuses on building DSP and AI-based systems for audio processing.