When someone asks which power amplifier or digital-to-analog converter (DAC) they should buy, I usually bring up the importance of measurements, and point out that performance specs published by major manufacturers can generally be trusted. I often add that most modern electronic audio devices are good enough to be audibly transparent. This means they color the sound so little that human ears can’t detect a change, even if test equipment can measure a difference.

This also means that when compared properly, most DACs and power amps — and other modern devices too — sound alike since they impart no coloration of their own onto the audio passing through them. Even when a change to the sound might be heard, audio engineers can usually tell with reasonable certainty what types and amounts of coloration are sufficient to actually harm the listening experience. Ten percent distortion is sure to be noticed, while jitter noise 110 dB below the music will never be heard.
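To put those two figures on the same scale, it helps to convert between percentages and decibels. The sketch below is illustrative only: the function names are hypothetical, and it assumes the usual amplitude (voltage) convention of 20 times the base-10 logarithm of the ratio.

```python
import math

def amplitude_ratio_to_db(ratio: float) -> float:
    """Convert a linear amplitude (voltage) ratio to decibels."""
    return 20.0 * math.log10(ratio)

def db_to_amplitude_ratio(db: float) -> float:
    """Convert a level in decibels to a linear amplitude (voltage) ratio."""
    return 10.0 ** (db / 20.0)

# Ten percent distortion, expressed relative to the music signal.
distortion_db = amplitude_ratio_to_db(0.10)

# Jitter artifacts 110 dB below the music, expressed as a fraction of it.
jitter_ratio = db_to_amplitude_ratio(-110.0)

print(f"10% distortion is {distortion_db:.1f} dB relative to the signal")
print(f"-110 dB is {jitter_ratio * 100:.5f}% of the signal amplitude")
```

Ten percent distortion works out to only 20 dB below the music, while artifacts at -110 dB amount to roughly 0.0003 percent of the signal amplitude.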

When I post information like this, inevitably someone will counter, “You can’t measure a symphony” or “If most audio devices are transparent, why doesn’t my stereo sound like I’m at a live concert?” Of course, the enjoyment of a symphony or any other music is purely subjective, whereas equipment performance is based entirely in science and can be measured precisely.

Obviously nobody can define the worth of a piece of music for anyone but themselves. Just because some people dislike opera doesn’t mean that opera is bad. Further, musical tastes can change over time. One famous example is the 1913 premiere of Igor Stravinsky’s The Rite of Spring, which was so poorly received that the audience actually rioted! Today, it’s considered an important work. Even your own taste can change: it’s common to like a song more after you’ve heard it a few times. But that has nothing to do with the accuracy of a recording or playback system.

However, the second question is more difficult to answer. To be sure, the difference in sound between a venue and your living room is not due to any inaccuracy of your audio electronics. A good professional voltmeter can easily measure a response error or volume change of 0.02 dB, but nobody could possibly hear that.

A Fast Fourier Transform (FFT) display in audio editing software can identify distortion components 130 dB or more below a sine wave test tone, but even the most gifted audiophile can’t possibly hear that. Now, loudspeakers do vary audibly, but that’s caused mostly by response errors that change the frequency balance by some amount. And most speakers ring at least a little at some frequencies. But clearly, the fidelity of your audio devices and speakers is not the reason your hi-fi doesn’t make your living room sound like you’re hearing a concert in Alice Tully Hall.
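As a rough illustration of how easily software can resolve a component that far down, the sketch below builds a 1 kHz test tone with a third harmonic placed 130 dB lower, then measures both. It is not how any particular audio editor works: it uses a single-bin discrete Fourier transform rather than a full FFT, and the sample rate, tone frequencies, and function name are all assumptions chosen so that both tones complete a whole number of cycles.

```python
import math

SAMPLE_RATE = 48_000   # assumed sample rate in Hz
NUM_SAMPLES = 4_800    # 0.1 second: whole cycles of both tones, so no window is needed

def tone_amplitude(samples, freq_hz, sample_rate):
    """Amplitude of one frequency component, measured with a single-bin DFT."""
    re = 0.0
    im = 0.0
    for n, x in enumerate(samples):
        angle = 2.0 * math.pi * freq_hz * n / sample_rate
        re += x * math.cos(angle)
        im += x * math.sin(angle)
    return 2.0 * math.hypot(re, im) / len(samples)

# Test signal: a full-scale 1 kHz sine plus a 3 kHz component 130 dB below it.
harmonic_level = 10.0 ** (-130.0 / 20.0)
signal = [
    math.sin(2.0 * math.pi * 1_000 * n / SAMPLE_RATE)
    + harmonic_level * math.sin(2.0 * math.pi * 3_000 * n / SAMPLE_RATE)
    for n in range(NUM_SAMPLES)
]

fundamental = tone_amplitude(signal, 1_000, SAMPLE_RATE)
harmonic = tone_amplitude(signal, 3_000, SAMPLE_RATE)
relative_db = 20.0 * math.log10(harmonic / fundamental)

print(f"3 kHz component: {relative_db:.1f} dB relative to the 1 kHz tone")
```

Double-precision arithmetic has far more resolution than this test needs, which is why the tiny component is recovered cleanly even though it is buried under a tone millions of times larger.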

Acoustics Dominates

The real issue is the vast difference in acoustics. Forget for a moment trying to make your hi-fi sound like you’re listening to music in a venue, and just consider how musical instruments generate sound. One reason a loudspeaker playing a recording of a violin in your living room doesn’t sound like a live violinist playing in your living room has nothing to do with the accuracy of your equipment. The radiation pattern of a loudspeaker is very different from that of a violin. At mid and high frequencies, a typical “box” loudspeaker sends sound mostly forward, which then spreads outward over distance. But starting around 300 Hz and going lower, most loudspeakers become more and more omnidirectional. At 100 Hz sound leaves most speakers in every direction more or less equally.

In contrast, a violin sends different frequencies off in many different directions. High frequencies go straight up off the top plate, low frequencies go downward and off to one side, and so forth. Most of the items in a typical drum set are also highly directional. Therefore, different frequencies reach the walls, the floor, and the ceiling in a manner that’s very different from a loudspeaker playing a recording of the same violin performance. Of course, all those different reflection source locations affect the sound reaching your ears.

When surround speakers are available, mixing engineers can more easily create the sound of a large space by simply delaying the sound to the rear speakers and attenuating higher frequencies. That creates the effect of your rear walls being farther away. In fact, many consumer receivers include various faux environment options just for this purpose. (Though I never enable those effects!) Indeed, most movie soundtracks already contain whatever embedded surround ambience and delays the producers and engineers deem desirable. However, for this to be truly convincing, the room must be well treated to prevent the inevitable early room reflections from contaminating and diluting the effect. Sadly, many hi-fi listening rooms and home theaters have no acoustic treatment at all.
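The delay-and-dull idea can be sketched in a few lines. This is a minimal illustration, not a description of what any receiver or mixing console actually does: the function name, the 343 m/s speed of sound, the extra distance, and the 4 kHz cutoff are all assumed values, and the high-frequency roll-off is a simple one-pole low-pass filter.

```python
import math

SPEED_OF_SOUND_M_S = 343.0  # approximate value for air at room temperature

def simulate_rear_channel(samples, sample_rate, extra_distance_m, cutoff_hz):
    """Delay a signal and roll off its highs with a one-pole low-pass filter."""
    # Delay equal to the time sound needs to travel the extra distance.
    delay_samples = round(extra_distance_m / SPEED_OF_SOUND_M_S * sample_rate)
    # Smoothing coefficient for the one-pole low-pass filter.
    alpha = 1.0 - math.exp(-2.0 * math.pi * cutoff_hz / sample_rate)
    output = [0.0] * delay_samples
    state = 0.0
    for x in samples:
        state += alpha * (x - state)
        output.append(state)
    return output

# Hypothetical example: make the rear wall seem 3.43 m farther away.
impulse = [1.0] + [0.0] * 99
rear = simulate_rear_channel(impulse, 48_000, extra_distance_m=3.43, cutoff_hz=4_000)
first_nonzero = next(i for i, v in enumerate(rear) if v != 0.0)
delay_ms = first_nonzero / 48_000 * 1000.0
print(f"rear channel starts {first_nonzero} samples ({delay_ms:.1f} ms) late")
```

Each additional meter of apparent distance adds roughly 3 milliseconds of delay, so a convincing "large room" needs only a few tens of milliseconds.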

Electronic instruments such as synthesizers and electric guitars can often be made to sound like they’re playing live in your room. The original source for an electric guitar is the loudspeaker in a guitar amplifier. So if you play an accurate recording of a guitar amp through your hi-fi, it can sound pretty much like the guitar amp was in the room with you at that same location. (Or at some location midway between the left and right stereo speakers.)

However, this requires the recording microphone to have been very close to the guitar amp’s own speaker to avoid picking up ambience from the room in which it was being recorded. This is how electric guitars are usually recorded anyway. Or a guitar can be recorded direct, with no amplifier, through an “amp-sim” device that simulates the tone and intentional distortion of a real guitar amplifier. I’ve done this many times and I know it works. This is especially common when recording electric basses, which are usually captured through an electrical feed rather than using a microphone with a bass amp. Sometimes a microphone is used, depending on the style of music, but a direct feed is almost always recorded too. So an electric bass can also sound pretty much the same as having a bass amp in your living room.

As explained earlier, achieving this sort of realism is difficult with acoustic instruments because of their varied radiation patterns versus frequency. But there’s another related problem: Most acoustic instruments emit different sounds from different places. This is not the same as different frequencies leaving in different directions. For example, some of the sound of a clarinet is emitted from the finger holes along the length of the body, and some comes out the main “bore” opening at the far end. The frequency spectrum is different at those locations, so a clarinet’s characteristic sound is really the summation of those frequencies. A microphone placed about a foot above the instrument, and slightly out in front, can usually capture the full complement of frequencies.

But a cello or string bass is much larger than a clarinet, so you can’t place a microphone close enough to avoid room tone because there’s no one place that contains the full range of frequencies these instruments generate. If you put a microphone near the “f” holes, you’ll get a bassy sound lacking clarity. If you put it near the bridge, the sound captured will be very thin. A microphone placed near the 12th fret of an acoustic guitar captures a very different sound than when it’s near the sound hole.

Likewise for a grand piano, which is even larger. It’s true that high-quality studio recordings are often made by placing mics very close to the piano strings, especially for popular music and modern jazz. But those recordings don’t actually sound like a piano is physically in your room when played back through loudspeakers. Often the sound is even fuller and clearer! I’ll have more to say about that in a moment.

This is why good recordings are made in large rooms, whether in a concert hall or a professional recording studio. A large room lets you put the microphones far enough away to capture the full range of frequencies from the instrument, without sounding boxy and off-mic as happens when recording in someone’s untreated bedroom. The back wall of every auditorium stage is reflective, and it’s usually curved or angled to focus and project sound exiting the rear of an orchestra back out to the audience in front. This also helps the players hear each other better. A curved or angled “roof” is often placed above the stage, again to ensure that all the sound energy generated at all frequencies gets out to the audience seats.

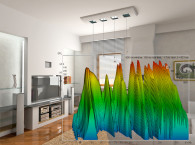

This is also why home recording studios need to be well treated acoustically. In fact, the smaller the room, the more dead sounding it needs to be for both recording and mixing. Acoustic treatment makes a room more neutral sounding by avoiding the coloration known as comb filtering that manifests as a series of response peaks and deep nulls. With acoustic treatment to absorb the early reflections, you can pull the microphones back far enough from an instrument to capture its complete range of frequencies, without also picking up the small-room ambience that exists in all untreated home size rooms.
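The peaks and nulls of comb filtering follow directly from the extra distance a reflection travels. The sketch below models only the simplest case, the direct sound plus a single reflection, and every number in it is an assumption: a 343 m/s speed of sound, a reflection path 0.343 m longer than the direct path, and a reflection at 0.7 times the direct level. The function names are hypothetical.

```python
import cmath
import math

SPEED_OF_SOUND_M_S = 343.0  # approximate value for air at room temperature

def comb_response_db(freq_hz, delay_s, reflection_gain):
    """Level of direct sound plus one delayed reflection, relative to direct alone."""
    combined = 1.0 + reflection_gain * cmath.exp(-2j * math.pi * freq_hz * delay_s)
    return 20.0 * math.log10(abs(combined))

def null_frequencies(delay_s, max_freq_hz):
    """Frequencies where the reflection arrives half a cycle late and cancels."""
    nulls = []
    k = 0
    while (2 * k + 1) / (2.0 * delay_s) <= max_freq_hz:
        nulls.append((2 * k + 1) / (2.0 * delay_s))
        k += 1
    return nulls

extra_path_m = 0.343                          # assumed extra travel distance
delay_s = extra_path_m / SPEED_OF_SOUND_M_S   # about 1 millisecond

for f in null_frequencies(delay_s, 3_000):
    print(f"null near {f:.0f} Hz: {comb_response_db(f, delay_s, 0.7):+.1f} dB")
print(f"peak near {1.0 / delay_s:.0f} Hz: "
      f"{comb_response_db(1.0 / delay_s, delay_s, 0.7):+.1f} dB")
```

The nulls are evenly spaced in frequency, which is what gives the response its comb-like shape. The stronger the reflection relative to the direct sound, the deeper the nulls, so absorbing the reflection shrinks them.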

Better Than Real Life

In my 70 years, I’ve been to Carnegie Hall, and I’ve heard the National Symphony Orchestra perform at Kennedy Center. I’ve been to dozens of other venues both large and small to hear all types of music. In my opinion, a good recording can and should sound better than being at a live concert! Aside from not hearing people cough or shush their children, and being able to press Pause to grab a snack, I think recordings should sound larger than reality, and closer than a seat 20’ to 30’ from the stage in a concert hall.

In an educational video sponsored by plug-in maker Waves, black belt mixing engineer Alan Meyerson shows many of the techniques he used when mixing the score for the movie Wonder Woman to make the orchestra sound larger, clearer, and just plain better than reality.

As explained in my “Early Reflections” article, when music is played back in a small untreated room, the strong early reflections from nearby surfaces drown out the larger sounding reverb already present in the recording. This makes the music sound smaller and narrower, not larger and wider as many people believe. This is precisely why all excellent hi-fi rooms and home theaters have plenty of acoustic treatment, especially absorption.

It’s important to mention that sound stage and imaging have nothing to do with electronics. There’s nothing a preamp, wire, or so forth can do to affect imaging, though frequency response changes can affect apparent depth slightly, with less treble making instruments and voices sound farther back. The width and depth of stereo placement, and imaging effects, are mostly a function of echoes and reverb in the original source recording.

Untamed reflections in your own listening room can only damage imaging. Further, contrary to popular belief, stereo width can be measured. The device that does this is called a Phase Correlation Meter, and it shows the amount and phase of left-right channel differences. Recording studios use this to verify mono compatibility, to ensure that nothing in a stereo recording will be lost if the two channels are reduced to mono.
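The core calculation behind such a meter can be sketched as a normalized correlation between the two channels. This is a simplified illustration under stated assumptions, not the design of any specific product: real meters average over a short moving window, whereas this hypothetical function processes a whole buffer at once, and the 48 kHz sample rate and 1 kHz test tone are arbitrary choices.

```python
import math

def phase_correlation(left, right):
    """Normalized correlation of two channels: +1 identical, 0 unrelated, -1 inverted."""
    cross = sum(l * r for l, r in zip(left, right))
    energy = math.sqrt(sum(l * l for l in left) * sum(r * r for r in right))
    # Silence in either channel has no defined correlation; report 0.
    return cross / energy if energy > 0.0 else 0.0

n = 480  # ten whole cycles of 1 kHz at an assumed 48 kHz sample rate
tone = [math.sin(2.0 * math.pi * 1_000 * i / 48_000) for i in range(n)]
shifted = [math.cos(2.0 * math.pi * 1_000 * i / 48_000) for i in range(n)]
inverted = [-x for x in tone]

print(f"identical channels (mono): {phase_correlation(tone, tone):+.2f}")
print(f"90 degrees apart:          {phase_correlation(tone, shifted):+.2f}")
print(f"one channel inverted:      {phase_correlation(tone, inverted):+.2f}")
```

A reading near +1 indicates a narrow, mono-like signal, values closer to 0 indicate a wide stereo image, and readings that dip toward -1 warn that material will cancel when the channels are summed to mono.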

Indeed, it’s clear that acoustic treatment is the most important thing you can have to achieve realism in a home listening environment. It gets you closest to the spacious sound of headphones, while enjoying the benefits of loudspeakers. In my well-treated stereo/home theater room, cars and traffic sounds on TV and in movies often sound like a car is in my driveway or out on the road. When a cat meows in a movie, I often have to look over to see if it was one of my own cats! And good orchestra recordings sound much more like “being there” than in any room that lacks the basic reflection control described here.

Indeed, acoustic treatment is one of the last things many audiophiles consider, yet it’s the one thing that truly will make their systems sound more real and lifelike. And then they might finally be satisfied and cease the endless, futile cycle of “upgrading” their audio gear!

This article was originally published in audioXpress, August 2019.

Resources

G. Behler, “Musical Instrument Directivity Database for Simulation and Auralization,” The Journal of the Acoustical Society of America, Volume 140, Issue 4, November 2016,

https://asa.scitation.org/doi/abs/10.1121/1.4969977

“Mixing the Wonder Woman Film Score – Masterclass with Alan Meyerson,” Waves Audio, June 2018,

www.youtube.com/watch?v=h9jZqn5lnw4

Y. Nishimura, N. Yasui, and S. Nishimura, “A Consideration on the Sound Radiation Pattern of Violin,” International Journal of Emerging Engineering Research and Technology, Volume 4, Issue 2, February 2016,

www.ijeert.org/pdf/v4-i2/4.pdf

L. M. Wang and C. B. Burroughs, “Directivity Patterns of Acoustic Radiation from Bowed Violins,” Digital Commons @University of Nebraska-Lincoln,

http://digitalcommons.unl.edu/cgi/viewcontent.cgi?article=1065&context=archengfacpub

E. Winer, “Audiophoolery,”

http://ethanwiner.com/audiophoolery.html

E. Winer, “RealTraps - Recording Spaces,” EQ Magazine, June 2004,

http://realtraps.com/art_spaces.htm

E. Winer, “Early Reflections Are Not Beneficial,”

http://ethanwiner.com/early_reflections.htm