According to Facebook, the new 360 audio technology maintains high-quality spatial audio throughout the pipeline, from editor to user, making it practical for large-scale consumption for the first time. "The sound quality remains high because we support what we describe as hybrid higher-order ambisonics, an 8-channel system with rendering optimizations to incorporate the quality of higher-order ambisonics with fewer channels, ultimately saving on bandwidth," explain Hans Fugal and Varun Nair, two of the engineers involved in the Facebook project.

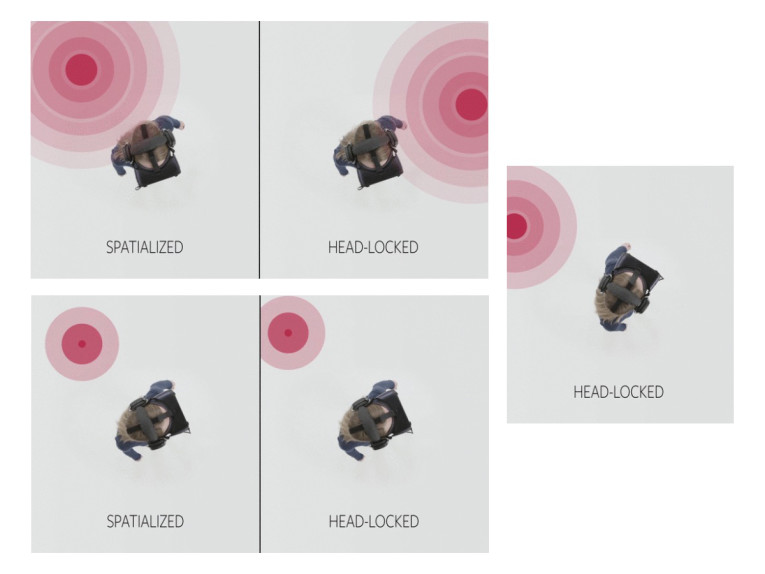

"Our audio system supports spatial audio and head-locked audio simultaneously. In spatialized audio the system reacts to which way a person's head is turned in the 360 experience when hearing sounds coming from the scene. With head-locked audio, things like narration and music stay static. Rendering with hybrid higher-order ambisonics and head-locked audio simultaneously is a first for the industry."
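To make the distinction concrete, here is a minimal sketch of the idea. It is not Facebook's renderer: it assumes plain first-order ambisonics (four channels W, X, Y, Z, with X pointing forward and Y pointing left) rather than the 8-channel hybrid format, and a deliberately crude stereo decode instead of real binaural rendering. The function names are hypothetical and chosen only for illustration.

```python
import math

def encode_source(sample, azimuth_rad):
    """Encode a mono sample at a horizontal azimuth into first-order
    ambisonics (assumed convention: X forward, Y left, W unscaled)."""
    return (sample,
            sample * math.cos(azimuth_rad),
            sample * math.sin(azimuth_rad),
            0.0)

def rotate_for_head_yaw(w, x, y, z, head_yaw_rad):
    """Counter-rotate the sound field so sources stay fixed in the scene
    when the listener turns their head (positive yaw = turning left)."""
    c, s = math.cos(head_yaw_rad), math.sin(head_yaw_rad)
    return w, x * c + y * s, y * c - x * s, z

def decode_stereo(w, x, y, z):
    """Crude stereo decode (an assumption, not binaural): left and right
    are taken as W plus or minus the left-pointing Y component."""
    return 0.5 * (w + y), 0.5 * (w - y)

def render(spatial_sample, head_locked_lr, head_yaw_rad):
    """Mix head-tracked spatial audio with head-locked stereo.
    Only the spatial part depends on the head orientation."""
    left, right = decode_stereo(*rotate_for_head_yaw(*spatial_sample, head_yaw_rad))
    return left + head_locked_lr[0], right + head_locked_lr[1]

# A source straight ahead in the scene, plus head-locked narration.
source = encode_source(1.0, 0.0)
narration = (0.2, 0.2)
facing_front = render(source, narration, 0.0)
turned_left = render(source, narration, math.pi / 2)
```

In this sketch, turning the head 90 degrees to the left moves the scene source entirely into the right channel, while the narration contributes the same amount to both ears in either orientation.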

The presentation of the 360 audio technology emphasized that the spatial audio renderer supports real-time experiences with less than half a millisecond of latency. The FB360 Encoder tool exports to multiple platforms, and the Rendering SDK is integrated across Facebook and Oculus Video, which guarantees a unified experience from production to publication. That saves time and ensures that what users hear in production is what will be published.

The company published a detailed description of the new FB360 spatial audio encoding and rendering technology in a blog post. It also notes that there is still a lot of work to do, including defining a video file format that can store all audio in one track and possibly exploring adaptive bitrate and adaptive channel layout "to improve the experience for people with limited bandwidth, or enough bandwidth to receive even higher quality."
